The problem

Recently I had a bit of time on my hands and was looking for an interesting problem to play with. A friend suggested OCR for musical scores, since he hadn't found any useful software when he needed it a few years back. The motivation was that sites like CPDL contain scores for a lot of music, but only very few of them are available in an open, machine-readable format like MusicXML.

The solution

Luckily, an internet search yielded Audiveris, an open source tool whose soon-to-be-available version 5 is supposed to work much better than previous versions, even on scanned scores.

Ok, now I can goof around

As the problem is already solved, I decided to learn about and play with Deep Learning, the arsenal of methods that fuels a lot of the current AI applications like image detection, image captioning, machine translation, self-driving cars and playing Go.

I want to try to apply these methods to music OCR. I have no high expectations for the resulting system, but I do have expectations for my learning. I prefer learning with a concrete problem at hand: it makes it easier to dive deep into the literature, whereas learning without a problem tends to stay near the easy introductory surface.

I hope that this will be the first in a series of posts that documents my journeys through the depths of deep learning, but then again I have started blog series in the past and never finished them, so no false hopes.

A big disclaimer

I am not an expert in deep learning and I have not done much in this area, so everything that follows is potentially wrong, confused or biased, and I may well use the wrong words to talk about it. What I write seems reasonable and sufficiently consistent in the happy place that is my mind, but I wouldn't bet money that my ramblings hold up under professional scrutiny.

Training data

One of the big problems with deep learning is the availability of large quantities of high quality data. If labeled data is available, supervised learning can be used, which is well understood for many applications. But labeling large quantities of data by hand is time consuming, expensive and boring, and I am not aware of any existing music-related OCR data sets suitable for my problem. (Frankly, I haven't even looked very hard. As they say, "Often you can save an hour in the library by spending a few months in the lab".) So the main constraint is the data that I am able to acquire in a mostly automated fashion.

Scraping CPDL

CPDL doesn't readily index the formats in which a given score is available, so I went the other way round and started from the uploaded files.

Luckily, it turns out that their MediaWiki installation can show a list of uploaded files, and while the form on the website only offers a choice of a few small page sizes, the endpoint accepts much larger numbers.

So I was able to download an HTML page listing 5000 files uploaded to CPDL. This can be extended by offsetting the results with another URL parameter, but the way the offset works was a bit annoying, so I decided that 5000 is enough for a first round.
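As a rough illustration, such a request URL could be built like this. This is a minimal sketch and not the script used for this post: the base URL, the `Special:ListFiles` page title and the parameter names `limit` and `offset` are assumptions based on how MediaWiki installations are commonly set up.

```python
from urllib.parse import urlencode

# Assumed location of the wiki's index.php; not taken from the post.
BASE_URL = "https://www.cpdl.org/wiki/index.php"


def list_files_url(limit=5000, offset=None):
    """Build a file-list URL that asks for `limit` entries on one page."""
    params = {"title": "Special:ListFiles", "limit": limit}
    if offset is not None:
        # The offset format depends on the wiki; pass through whatever
        # value the page's own "next" link uses.
        params["offset"] = offset
    return BASE_URL + "?" + urlencode(params)


if __name__ == "__main__":
    print(list_files_url())
```

The resulting page can then be fetched with any HTTP client and handed to an HTML parser.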

Then I prepared a delicious and beautiful soup. Armed with the assumption that files that belong together differ only in their extension, I was able to extract about 280 PDFs with corresponding MusicXML files, about 20 JPEG and PNG images and about 1700 PDFs without corresponding XML files.
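The "same name, different extension" assumption can be sketched in a few lines. The function names and the choice of `xml` as the only MusicXML extension are illustrative assumptions, and the input is simply a list of file names, however they were pulled out of the HTML.

```python
from collections import defaultdict
from pathlib import PurePosixPath


def group_by_stem(filenames):
    """Map each base name to the set of lowercase extensions seen for it."""
    groups = defaultdict(set)
    for name in filenames:
        path = PurePosixPath(name)
        groups[path.stem].add(path.suffix.lower().lstrip("."))
    return groups


def pdf_xml_pairs(filenames, xml_exts=("xml",)):
    """Return the sorted base names that have both a PDF and an XML file."""
    groups = group_by_stem(filenames)
    return sorted(
        stem
        for stem, exts in groups.items()
        if "pdf" in exts and any(ext in exts for ext in xml_exts)
    )


if __name__ == "__main__":
    files = ["Ave_Maria.pdf", "Ave_Maria.xml", "Kyrie.pdf", "Gloria.PNG"]
    print(pdf_xml_pairs(files))
```

Only `Ave_Maria` has both files in this made-up list, so it is the only pair that would be kept.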

Building half an OCR system to build an OCR system

My plan is to use deep magic to build a black box into which I feed images of sheet music and out of which I get some machine-readable representation of the music. To train this deep magic I need matching pairs of images and machine-readable music. The only reasonable way to get something like that is to squeeze the machine-readable representation out of the MusicXML files and somehow extract the image data from the corresponding PDF files.

So I wrote a script that converts the PDFs to PNG files by calling convert from ImageMagick and then uses the Hough transform from OpenCV to detect horizontal lines. On top of this I piled a bit of hacked-up processing that identifies and extracts individual staffs relatively reliably.

For a short while I tried to extract individual measures and individual notes, but this became too ad hoc and fragile. The extraction kind of works at the staff level, but it is far from robust, so I deal with problems in the simplest possible way: if it looks like something didn't work, I don't spend much time investigating causes and solutions, I just skip this score and continue with the next.
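To give an idea of what such staff-level processing might look like, here is a small sketch that groups the y-coordinates of detected horizontal lines into staffs of five lines and gives up on anything suspicious. It is not the processing used for this post: the function name, the thresholds and the "five evenly spaced lines" heuristic are all assumptions, and the input is just a list of numbers such as a Hough transform might produce.

```python
def group_staffs(line_ys, tolerance=0.25, merge_gap=2):
    """Group y-coordinates of horizontal lines into staffs of five lines.

    Returns a list of five-element lists, or None if the page looks
    suspicious and should be skipped.
    """
    # A thick line is often detected several times; keep one y per cluster.
    merged = []
    for y in sorted(line_ys):
        if merged and y - merged[-1] <= merge_gap:
            continue
        merged.append(y)

    # A cleanly detected page has a multiple of five staff lines.
    if not merged or len(merged) % 5 != 0:
        return None

    staffs = []
    for i in range(0, len(merged), 5):
        staff = merged[i:i + 5]
        gaps = [b - a for a, b in zip(staff, staff[1:])]
        mean_gap = sum(gaps) / len(gaps)
        # Within one staff the four gaps should be nearly equal.
        if any(abs(gap - mean_gap) > tolerance * mean_gap for gap in gaps):
            return None  # skip instead of trying to repair the detection
        staffs.append(staff)
    return staffs


if __name__ == "__main__":
    ys = [100, 101, 110, 120, 130, 140, 300, 310, 320, 330, 340]
    print(group_staffs(ys))
```

Returning `None` mirrors the "just skip this score" policy: the caller drops the whole page rather than guessing.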

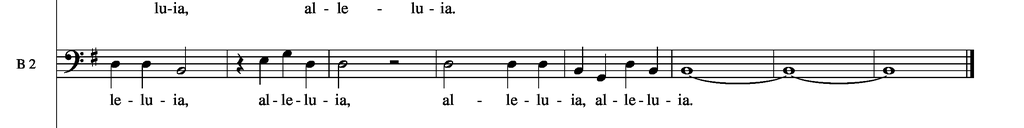

The resulting images of have a resolution of 1024x128 pixels and looks like this:

MusicXML

Next are the MusicXML files. The structure is quite straightforward, and after a bit more soup I was able to extract sequences of notes.

However there are a few problems:

- Music can get pretty complicated. There are scores where the number of staffs in a system and the voices in these staffs changes. So I tried to detect the difficult cases and skip them.

- I also skip scores with multiple voices per staff, because these cannot be modeled by a simple sequence of notes.

- I make a few bold assumptions about how to find staffs in the XML and which of the extracted images they correspond to. This requires dealing with systems, line breaks and page breaks. So again, I skip the complicated cases and the cases where the results don't match (e.g. the number of staffs on a page).
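The extraction and the "skip the difficult cases" policy described above can be sketched with the standard library alone. Note that this uses `xml.etree.ElementTree` rather than a soup, treats each part as a single staff, and ignores accidentals; the function name and the exact skip rules are assumptions for illustration.

```python
import xml.etree.ElementTree as ET


def part_to_measures(part):
    """Return one token list per measure, or None if the part should be skipped."""
    measures = []
    for measure in part.findall("measure"):
        tokens = []
        voices = set()
        for note in measure.findall("note"):
            if note.find("chord") is not None:
                return None  # simultaneous notes do not fit a plain sequence
            voices.add(note.findtext("voice", default="1"))
            note_type = note.findtext("type")
            if note_type is None:
                return None  # no written duration, e.g. some full-measure rests
            if note.find("rest") is not None:
                tokens.append("rest" + note_type)
                continue
            step = note.findtext("pitch/step")
            octave = note.findtext("pitch/octave")
            if step is None or octave is None:
                return None  # e.g. unpitched percussion
            tokens.append(note_type + step + octave)
        if len(voices) > 1:
            return None  # more than one voice in this measure
        measures.append(tokens)
    return measures


if __name__ == "__main__":
    xml = """<score-partwise><part id="P1"><measure number="1">
      <note><pitch><step>D</step><octave>3</octave></pitch><type>half</type></note>
      <note><rest/><type>half</type></note>
    </measure></part></score-partwise>"""
    root = ET.fromstring(xml)
    for part in root.findall("part"):
        print(part_to_measures(part))
```

A part that comes back as `None` is simply dropped from the data set.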

The note encoding

The neural net doesn't know about music. It does know about categories, or an alphabet, but these are just sets of things that have no structure as far as the net is concerned. So usually one maps everything onto the integers.

One has quite a bit of freedom in how detailed the encoding of the notes should be. There is a tradeoff between how detailed the description is (there is a lot more information in the XML files, e.g. stem direction) and how many different types of notes have to be distinguished (the more detail, the more classes, and the more data required to train properly).

I chose an encoding that contains only pitch and duration. This still leads to quite a large number of classes, so I fear that the amount of data available will not be sufficient.

I also expect difficulties with the pitch, as this is determined both by the position of the note and the clef at the beginning of the line. I would be surprised if the net picks up this relationship.

The resulting sequences look like this. I put measures on separate lines, just because I can, but I probably will not use this information.

quarterD3 quarterD3 halfB2
restquarter quarterE3 quarterG3 quarterD3
halfD3 resthalf
halfD3 quarterD3 quarterD3
quarterB2 quarterG2 quarterD3 quarterB2
wholeB2
wholeB2
wholeB2
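Mapping tokens like these onto the integers could look roughly as follows. This is a generic sketch with hypothetical function names, and says nothing about how the ids are later fed to a network.

```python
def build_vocab(sequences):
    """Assign every distinct token an integer id, in sorted order."""
    tokens = sorted({token for seq in sequences for token in seq})
    return {token: index for index, token in enumerate(tokens)}


def encode(sequence, vocab):
    """Replace each token by its integer id."""
    return [vocab[token] for token in sequence]


if __name__ == "__main__":
    staffs = [
        ["quarterD3", "quarterD3", "halfB2"],
        ["restquarter", "quarterE3", "quarterG3", "quarterD3"],
    ]
    vocab = build_vocab(staffs)
    print(len(vocab), "classes")
    print(encode(staffs[0], vocab))
```

Every distinct combination of duration and pitch becomes its own class, which is why the number of classes grows quickly with the level of detail in the encoding.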

Is this good data?

I don't think so. First of all, there is not much of it, and it is very coarse (staff level). Most of the MusicXML/PDF pairs are typeset with Finale, so if I succeed in my quest, I will probably end up with a bad approximation of the inverse of the Finale rendering engine.

My "if-something-fails-just-exclude-it-from-the-data-set" approach is not a good idea if you care about real world performance, because it introduces even more bias. Not only will the net be biased towards the kind of music that is typically put on CPDL by people who use Finale, but also towards the subset of music with only a single voice per staff and with line spacing and system arrangements that didn't trip up my funky preprocessing stage. But I guess for learning about neural networks it is not too bad.

In the next post I want to report on my success with unsupervised feature learning.